HDR vs SDR Monitor: Was the Test RIGGED???

HDR, or high dynamic range, means capturing or displaying as much information as possible between the whitest and the blackest points of an image. It creates really eye-catching images, so naturally the rollout of HDR gaming monitors generated a lot of hype at CES. Being able to preserve more of the highlight and shadow information in games would be amazing. However, I’m here to tell you that HDR gaming monitors aren’t worth it quite yet.

We first got a glimpse of HDR at CES in January, with a side-by-side demo running on an IPS panel on the left and an HDR panel on the right. Based on the images above, the HDR monitor looks much better thanks to superior vibrancy, but the dynamic range on both looked the same. Fast forward to now and we have actual gameplay comparisons in Mass Effect: Andromeda, with the standard picture profile on the left and HDR on the right. I was very curious as to why the picture on the left looked so much worse in comparison, with no contrast and no vibrancy. It looked very TN-like, even though it’s a super expensive panel. When we checked the monitor settings, it turned out that NVIDIA had toned down the brightness and the contrast and chosen a different gamma level, which made the SDR panel look worse next to the HDR panel. So we factory reset the SDR monitor so that the colors and everything else looked the way they do out of the box, and the picture quality just came to life. With all of NVIDIA’s crippling settings eliminated, we could actually do an apples-to-apples comparison.

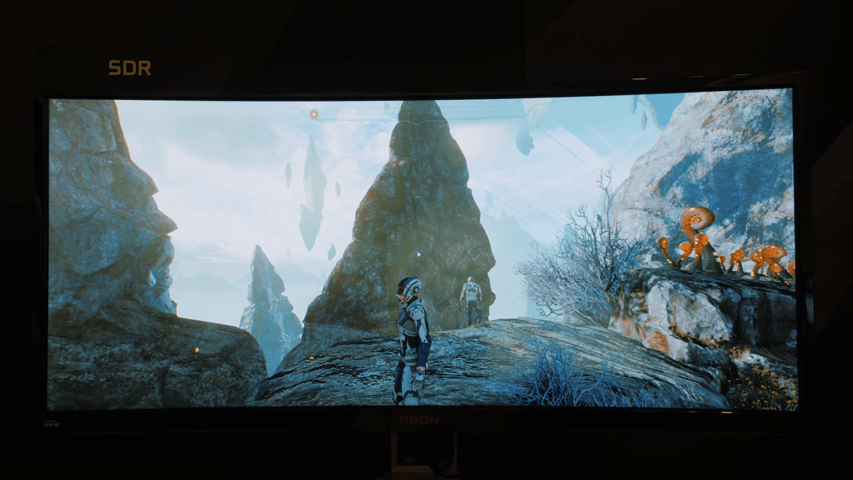

What you are looking at now is the SDR panel after the factory reset on the left and the HDR panel on the right. Mass Effect: Andromeda is shown here because it has a native HDR profile; the game was actually colored with HDR in mind. One immediate improvement I can see is better color definition in the blue sky, which is not as washed out as on the SDR panel. However, the exposure differences in the shadows and highlights really ruined the image for me. The shadows are much darker, the highlights are lower in brightness, and the really overexposed areas that have no color information stick out like a sore thumb.

For example, in the HDR picture of this scene the sun is just a white circle, the ground has very little color, and the shadows are barely visible. By comparison, the standard monitor handles highlight roll-off more naturally, the ground is properly colored, and generally speaking the whole scene is better exposed.

Here is another example. In SDR, this scene looks natural, with bright skies, good shadows, and fine color. However, when you move over to HDR, the brighter areas are more visible, but there is a decrease in brightness, so the scene looks flatter and less vibrant. It’s particularly noticeable in the red channel, where the plants look absolutely muted in comparison to SDR.

The main problem I see with HDR gaming is highlight control: fully overexposed elements like fire and lightning look worse than in SDR because the exposure gradient is harsher. You can spot similar issues with the highlights on top of the Rover and the sun in the image below, as well as the overall dimmer image.
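To make the idea of a harsh versus gentle exposure gradient concrete, here is a minimal Python sketch. It is purely illustrative: it is not how either monitor or the game actually tone-maps, and the function names, the `knee` parameter, and the sample values are all my own assumptions. It compares a hard clip, where everything above display white becomes the same flat white, with a soft roll-off that eases highlights toward white.

```python
import math


def hard_clip(luminance: float) -> float:
    """Clamp to the displayable range [0, 1]; all brighter values become flat white."""
    return min(max(luminance, 0.0), 1.0)


def soft_rolloff(luminance: float, knee: float = 0.8) -> float:
    """Leave values below the (hypothetical) knee untouched, then ease toward white.

    Above the knee the curve is an exponential shoulder that approaches 1.0
    without ever reaching it, so brighter inputs still map to brighter outputs.
    """
    if luminance <= knee:
        return max(luminance, 0.0)
    headroom = 1.0 - knee
    return knee + headroom * (1.0 - math.exp(-(luminance - knee) / headroom))


# Made-up scene luminance samples, where 1.0 is the display's white level.
scene = [0.5, 0.8, 1.0, 1.5, 2.0, 4.0]

for value in scene:
    print(f"in={value:4.1f}  clip={hard_clip(value):.3f}  rolloff={soft_rolloff(value):.3f}")
```

With the hard clip, every sample above 1.0 lands on exactly the same white, which is the "white circle" look. With the roll-off, those samples stay slightly different from each other, so the transition into the brightest areas is more gradual.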

Now, one important thing to remember with native HDR content like Mass Effect: Andromeda is that an actual colorist went into the game and colored and exposed those scenes specifically for HDR output. That means HDR adoption is slowly rolling out for gaming, which is awesome, but my concern is inconsistency in the visual experience from one HDR title to another. Each individual colorist will expose the image how they want it exposed and color grade it as they see fit, and to be frank I did not enjoy the HDR work in Mass Effect: Andromeda.

That’s it for our HDR versus SDR overview. Shame on sneaky NVIDIA for tinkering with those settings to make the SDR monitor look far worse than it actually would with the standard picture profile. That is not cool. Hopefully our images clearly showed the differences between the two modes. I actually have an LG HDR monitor coming into the studio, so I’m really excited to see how that plays out for non-HDR content. What type of enhancements or drawbacks will I notice in the visual quality? I will definitely be trying out multiple games and media content to see what that whole HDR consumer experience is like.