Not Good Enough… AMD RX 6700 XT Review

Today is the day before the RX 6700 XT officially launches, and if you are lucky enough you might be able to get one tomorrow when they go on sale at 9:00 AM PST; once again, that is 9:00 AM Pacific Time. Now if you want to know why these cards are so important for AMD you can check out this awesome overview that Dmitri did right over here, but in order for this card to have any success at all it needs three things: great performance, a competitive price, and probably the most important one, availability. Look, it’s obvious by now that even a massive amount of stock won’t be enough since demand is just way higher than supply, but the Radeon team absolutely needs to prove that they can supply more than the trickle of GPUs they managed with the RX 6800 and the RX 6900 series. That launch was a complete disaster in my opinion.

Even if every one of them sells out in the blink of an eye, getting regular resupplies out there is a huge part of winning the battle, and it’s something that they just haven’t been able to do yet. In this review we will cover 1080P, 1440P, and 4K performance, answer the pricing questions, and throw in some overclocking results as well.

Specs

Let’s get right into a quick rundown of the things that you need to know about the RX 6700 XT. First of all, it has 2560 stream processors, which is a pretty major cut compared to the RX 6800, and it’s based on the Navi 22 core. Supposedly Navi 22 is a lot easier to produce in larger quantities than the RX 6800 series’ Navi 21, and that could lead to more inventory… maybe, but you just never know. There is also 12GB of GDDR6 operating at 16Gbps on a 192-bit wide memory bus, accompanied by 96MB of Infinity Cache. Check out those clock speeds: they are a lot higher than anything AMD has launched so far, which should make up for some of the RX 6700 XT’s stream processor loss. Now in order to actually hit those frequencies AMD needed to push the core to 1.2V, and that ends up leading to pretty high power consumption.
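To put those memory numbers in context, here is a quick back-of-the-envelope sketch in Python. It uses the standard peak bandwidth formula (per-pin data rate times bus width, divided by 8 bits per byte) with the 16Gbps and 192-bit figures quoted above. The function name is just for illustration, and the result is a theoretical peak that ignores whatever the Infinity Cache adds on top.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    data_rate_gbps: per-pin data rate in Gbps (16 for this card's GDDR6)
    bus_width_bits: memory bus width in bits (192 for this card)
    """
    # Each pin moves data_rate_gbps gigabits per second; divide by 8 for bytes.
    return data_rate_gbps * bus_width_bits / 8


if __name__ == "__main__":
    bandwidth = memory_bandwidth_gbs(16, 192)
    print(f"RX 6700 XT theoretical peak bandwidth: {bandwidth:.0f} GB/s")
```

The same function can be reused for any other card as long as you plug in its own data rate and bus width.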

Price

Meanwhile pricing for the RX 6700 XT places it $100 below the RX 6800 and a lot closer to the RTX 3070 and the RTX 3060 Ti. Now a lot of that is because AMD figures that the 12GB of memory will be more appealing than 8GB, especially for folks who are rocking higher resolution monitors. However, that might be a bit of a hard sell since their own slides show the $400 RTX 3060 Ti and the 6700 XT trading blows rather than outright domination by AMD. Even in comparison to the RX 5700 XT’s launch price, there is a hefty $80 or 20% premium, so it isn’t a perfectly clear upgrade path either.
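If you want to sanity check that premium yourself, the arithmetic is simple enough to sketch in Python. The $480 figure is the rounded price used in this review, and the $400 baseline for the RX 5700 XT is an assumption implied by the $80 gap mentioned above. The helper name is made up for this example.

```python
def price_premium(new_price: float, old_price: float) -> tuple[float, float]:
    """Return the premium in dollars and as a percentage of the old price."""
    difference = new_price - old_price
    return difference, difference / old_price * 100


if __name__ == "__main__":
    # Rounded prices: $480 for the RX 6700 XT, $400 assumed for the RX 5700 XT.
    dollars, percent = price_premium(480, 400)
    print(f"Premium over the RX 5700 XT: ${dollars:.0f} ({percent:.0f}%)")
```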

Design

Now let’s talk a little bit about this reference card, and personally I think it’s one of the best looking GPUs around right now. Initially it looked like you wouldn’t be able to find this design at normal retailers, but a lot of board partners will be selling this design alongside their custom cards. It’s pretty compact at just 10.4 inches long and it takes up only two slots, so fitting it inside smaller systems won’t be a problem. Even though it consumes almost the same amount of power as the RX 6800, it actually has a smaller internal heatsink and only uses two fans. Now will that end up leading to higher temperatures or increased noise? Well, we will test that in a bit. As for power input, it’s handled by an 8-pin + 6-pin layout. One of the disappointing things is that AMD actually eliminated the USB-C port on the rear I/O panel, so now you will only find three DisplayPort 1.4 ports and a single HDMI 2.1.

Power Consumption

Now speaking of power, let’s see how the RX 6700 XT behaves from that perspective. One highlight of AMD’s Navi architecture is its stable power delivery. Instead of a readout filled with peaks and valleys like the RTX 3070, the RX 6700 XT hardly ever goes above its 225W board power, and even when it does it’s only by 1W or 2W. Now bringing those results into slightly more readable charts shows the average power consumption actually being right in line with the RTX 3070, and only a few watts behind the reference RX 6800. AMD obviously made some massive strides in performance-per-watt over the RX 5700 XT as well. I hardly ever talk about idle power anymore since modern cards are super efficient in that area, but it’s still something we monitor in every test. I think it’s good that we did here as well because there was obviously something going on with the RX 6700 XT. After we looked into it a bit further I think we found the culprit too. You see, we were using a 4K 120Hz display, and during power consumption testing idle power just jumped whenever the display was set to that resolution and refresh rate. Lowering it to 60Hz fixed the issue. Either way, AMD is now aware of this problem and they should be rolling out a driver fix for it really soon.

Temperatures & Frequencies Over Time

Even with the chip chewing down about 220W and some peaks above 230W, the temperatures are still really, really well managed despite the more simplified heatsink design. Remember, the worst case hotspot temperature on these cards is set to 110°C and the reference design doesn’t get anywhere close to that. With a game clock of 2454MHz and a maximum boost clock of 2581MHz under the best conditions, seeing a 2530MHz average means the card is behaving in a really predictable way. Now originally I thought that the smaller and more compact cooler, with two fans instead of three, would cause this thing to be a bit louder than the reference RX 6800, but it actually ended up being quieter. Also, there wasn’t any of the inductor whine that I kept hearing on higher-end Radeon cards.

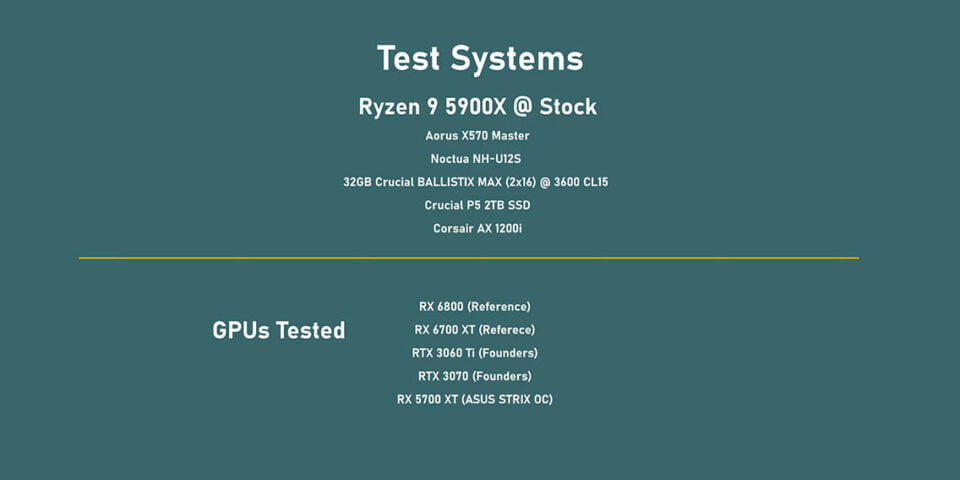

Now that the stage has been set, let’s see how this all translates into performance. Above you can see the specs of the test system that we are going to be using this time, but you will notice that some of the results have been updated for older cards since there have been a few game updates that modified frame rates in a few titles. Also, this is the first card that we are testing at all three resolutions, which means 1080P, 1440P and 4K.

1080P Benchmarks

Let’s start with 1080P, and here the RX 6700 XT really isn’t anything special, but that is likely due to the fact that some games like CS:GO and Valorant tend to be CPU limited, which artificially caps frame rates. Even then, this card trades blows with the RTX 3060 Ti rather than the RTX 3070. What you can expect is a good bump over the RX 5700 XT. Now that leads to it having pretty poor overall value. If you’re gaming at 1080P, though, it doesn’t fare better than the RX 6800. Then again, all of this is only valid if you can actually find one for $480 USD.

1440P Benchmarks

Now this card was supposed to be a 1440P champion, but the 6700 XT ended up showing performance that was all over the place. In some games it dominated, while in others it got whipped pretty badly, especially in the 1% lows. The lack of consistency resulted in it narrowly edging out the RTX 3060 Ti on average while being pretty far behind in 99th percentile frame rates. The end result from a value perspective is a bit narrower, but this really does show how the RTX 3060 Ti is almost too good of a GPU. It’s just so far out in front when it comes to delivering on the dollar-per-FPS front, even with 8GB of memory. But will the RTX 3060 Ti’s 8GB memory limitation finally come up in 4K? Let’s find out.
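For anyone wondering how numbers like average FPS, 1% lows, and dollar-per-FPS are typically derived, here is a rough Python sketch. It is not the exact method used for the charts in this review. The frame times are made up, the function names are hypothetical, and the 1% low shown here is just one common definition (the average frame rate over the slowest 1% of frames); other outlets use a percentile of frame times instead.

```python
def average_fps(frame_times_ms: list[float]) -> float:
    """Average frame rate over a capture, from per-frame times in milliseconds."""
    return 1000 * len(frame_times_ms) / sum(frame_times_ms)


def one_percent_low_fps(frame_times_ms: list[float]) -> float:
    """Average frame rate over the slowest 1% of frames (one common definition)."""
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)  # always keep at least one frame
    worst = slowest_first[:count]
    return 1000 * len(worst) / sum(worst)


def dollars_per_fps(price_usd: float, fps: float) -> float:
    """Value metric: lower means more frames for your money."""
    return price_usd / fps


if __name__ == "__main__":
    # Hypothetical capture: 99 smooth frames at 10 ms plus a single 25 ms stutter.
    frame_times = [10.0] * 99 + [25.0]
    avg = average_fps(frame_times)
    low = one_percent_low_fps(frame_times)
    print(f"Average: {avg:.1f} FPS, 1% low: {low:.1f} FPS")
    print(f"Value at $480: ${dollars_per_fps(480, avg):.2f} per FPS")
```

Notice how one stutter barely moves the average but drags the 1% low way down, which is why a card can edge ahead on average and still feel worse in practice.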

4K Benchmarks

It turns out that increasing the resolution can make the 6700 XT look a lot better, especially when it comes to the 1% lows, which are now better than the RTX 3060 Ti’s. However, it’s the lack of consistency that keeps coming up. I mean sometimes the XT beats the RTX 3070 cleanly and then in the next game it gets owned by the RTX 3060 Ti. Based on overall results I wouldn’t buy anything under an RX 6800 or RTX 3070 for 4K gaming. The RX 6700 XT does deliver an okay value here, but it just struggles to justify its overall cost at any resolution.

Ray Tracing Benchmarks

Ray Tracing performance is, as you would expect, not all that great. This generation of AMD cards really isn’t geared towards high levels of RT, so if you want to run with Ray Tracing on then NVIDIA is just a much better option right now. Personally though, I think in most cases turning the feature on really isn’t worth it unless you use NVIDIA’s DLSS to improve overall performance in games that support it.

Smart Access Memory Benchmarks

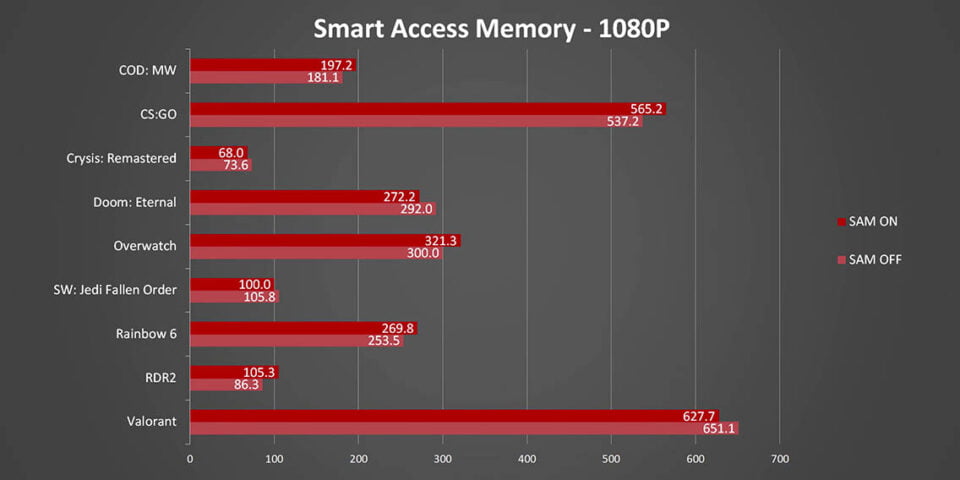

Now that we know the baseline performance I guess it’s time to head into some other areas, starting with Smart Access Memory. Now I need to remind everyone that SAM is only officially available on AMD 500 series motherboards paired with Ryzen 3000 or 5000 series CPUs, so it’s not like everyone will have access to it. Either way, in the games we tested there are some benefits to be had at 1080P, but there are also situations where something is obviously not going right and Smart Access Memory ends up hurting rather than improving performance. I’m guessing AMD has only validated it with a handful of titles so far. 1440P shows even smaller benefits or even larger frame rate drop-offs, so I would recommend testing your favorite games with this enabled and disabled just to see which would benefit you more. 4K proves that Smart Access Memory follows the law of diminishing returns as resolutions and detail settings increase. Here there are only either identical results or lower frame rates.

Overclocking Benchmarks

When it comes to squeezing a bit more value out of a GPU, overclocking can sometimes lead to some pretty big benefits, so let’s see what this little card can actually do, starting off with the limits that AMD is putting on it. First of all, AMD is limiting the maximum clock to 2950MHz, so just shy of 3GHz, but I’m sure there’s going to be third-party software that allows for more than that. We were able to max things out on the reference card without any issues. The minimum frequency, on the other hand, is a lot more picky since it locks the GPU at a minimum speed rather than allowing AMD’s boost algorithms to modulate in real time. We ended up setting it to 2600MHz since anything more than that would hard lock the card. Like I mentioned earlier, voltage is set by default to about 1.2V, so adjusting it in this section isn’t really necessary until AMD unlocks higher core clock values. I also need to mention that on our sample increasing the power limit didn’t really help clock speeds in any way, which could point towards voltage being the major limiting factor. On the other hand, we were able to completely max out the memory at just over 17Gbps.

What does this all lead to in terms of clock speeds? Well, that is where things get really, really interesting. Clock speeds went from an average of 2530MHz up to 2834MHz, which is an increase of just over 10%, and this was done without a massive increase in temperatures or noise either. I mean 10% isn’t a lot, but finding a stable overclock took less than an hour using AMD’s built-in tools, so it’s definitely novice friendly.

Typically a 10% clock speed increase will only lead to pretty limited returns, but it seems like the memory overclock is probably the reason behind some of the larger increases. I would say the biggest benefit was that it firmed up those 1% lows really well and allowed a lot better competition with the NVIDIA cards.
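To put actual numbers on those gains, here is a small Python sketch that works out the percentage increases from the figures quoted above. The core clocks are the measured averages from this review. The memory overclock is described as just over 17Gbps, so 17 is used here as a conservative floor, and the function name is just for illustration.

```python
def percent_increase(before: float, after: float) -> float:
    """Relative increase from before to after, as a percentage."""
    return (after - before) / before * 100


if __name__ == "__main__":
    # Average core clock: stock vs overclocked, in MHz.
    core_gain = percent_increase(2530, 2834)
    # Memory data rate: stock 16Gbps vs roughly 17Gbps overclocked.
    memory_gain = percent_increase(16, 17)
    print(f"Core clock gain:  {core_gain:.1f}%")
    print(f"Memory rate gain: {memory_gain:.2f}%")
```

Run as written, the core clock gain comes out to roughly 12%, with a bit over 6% more memory throughput on top, which fits with the memory overclock contributing to some of the larger increases.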

Conclusion

The nice thing is that this was all done within AMD’s limits, and if board partners come out with cards or BIOSes that allow for even more headroom the RX 6700 XT could become a little monster of a card. I guess this leads me to summing it all up, and I think I’m going to go back to a famous quote: “There are no bad GPUs, only bad prices.” That is where the 6700 XT lands. You see, this isn’t a bad GPU by any stretch of the imagination, but AMD is making a big statement with their $480 price point. With a price that high we would expect it to hit near RTX 3070 performance levels, but unfortunately it doesn’t.

Simply put, the RX 6700 XT’s performance inconsistencies really hurt AMD here. Sometimes it’s amazing while at other times it gets defeated by the RTX 3060 Ti by a big margin. Now enabling Smart Access Memory does narrow the gap a bit, but only by a few percentage points in the games we tested, and it’s only available for a very narrow range of platforms. I think all of this highlights how the market is going right now: AMD does pretty well in their sponsored games, while NVIDIA does better in theirs, and then in others it’s just a toss-up. That is exactly what our results show, but I guess at this point if you’re looking to buy one of these new GPUs all I want to say is good luck!