NVIDIA GeForce RTX 2070 Performance Review

The launch of NVIDIA’s RTX series has been a bit of a hit and miss situation. The RTX 2080 Ti and RTX 2080 perform well in benchmarks but their pricing is very, very high for what you get. Meanwhile, the Founders Editions we covered at launch came with steep premiums and the “starting at” prices NVIDIA quoted for both cards still haven’t materialized.

Now we’ve moved on to the RTX 2070 and things are being done a bit differently. You see, NVIDIA has put the burden of this launch upon the board partners’ collective shoulders by insisting they send out samples of cards which have the $500 “starting at” price. The only problem is that NVIDIA’s directive came pretty late in the game and as a result, every partner I spoke to was scrambling to switch production to lower cost cards. Speaking for myself, my samples arrived on a Friday morning with launch on a Tuesday and a flight to Beijing on Saturday afternoon. To say this was all last minute is being generous.

The reason I mention this is because it goes completely against NVIDIA’s typical nature. In this industry, AMD’s Radeon division has the dubious honor of always saddling reviewers with last minute information and samples arriving on short notice. NVIDIA on the other hand usually communicates well in advance and the GPUs arrive with about a week buffer zone before launch. This time we didn’t even hear a peep from the folks at Team Green and it took hours of emails and phone calls to nail down the samples I ended up receiving. What a bloody mess.

OK enough ranting and raving for one introduction. Let’s quickly recap what this new card is all about, starting with the TU106 core. This 10.8 billion transistor die has been supposedly architected for that perfect blend of efficiency, pricing and performance. It actually shares a lot in common with the TU102 since there are a dozen SMs per GPC and in many ways it looks like NVIDIA took their higher end core and simply cut it in half.

There are actually some interesting things going on here too. Unlike the RTX 2080 Ti which has a ROP partition / GPC ratio of 2:1, this chip has eight ROP groupings and three GPCs. There’s also 4MB of L2 cache which is quite a bit considering how many SMs and Texture Processing Units’ information is being fed into the memory. However, this ROP and cache-heavy design was necessary to ensure the TU106 received the eight memory controllers necessary for a 256-bit GDDR6 bus.

All in all, the RTX 2070 will ship with 2304 CUDA Cores, 144 Texture Units and 64 ROPs. This chip will also likely be used for further cut down versions of the RTX series if NVIDIA sees a market for them.

When those specs are put to paper, it looks like the RTX 2070 will have a bit of a hard time of things since, other than the extremely high memory bandwidth, its specs trail those of the GTX 1070 Ti quite significantly. Its “starting at” $500 price point is also higher than what many custom, pre-overclocked 1070 Ti’s are currently going for.

There’s no doubt NVIDIA’s RTX 2070 will run all over the GTX 1070 but there’s that price to contend with once again. Remember, back when there was more competition in the market NVIDIA launched that card at $380 and yet here we are staring down the barrel of a $500 shotgun for its successor. That hurts, especially when the very features RTX buyers are paying a premium for have failed to materialize in their favorite games.

Perhaps a larger issue for NVIDIA is how their RTX 2070 Founders Edition will be perceived by the buying public. It carries a whopping 20% premium over the baseline spec, bringing cost to within spitting distance of an RTX 2080. Provided board partners are able to launch versions at and below $550, I just can’t see gamers gravitating towards this version unless they are absolutely hardcore NVIDIA fans or the cooler design is something they absolutely need to have.

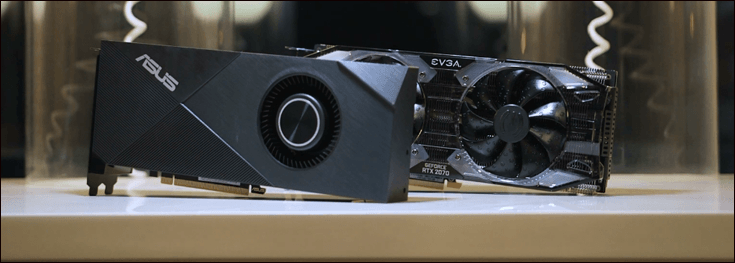

Luckily, just before leaving I ended up receiving two cards that I hope will be somewhat (more on that below) representative of what will be available in the market.

Let’s start with the ASUS RTX 2070 Turbo which happens to be a card that should be retailing for $500. Notice I said “should”? That’s because I’m told these particular blower-style cards will be precious commodities on launch day and likely for some time after that as well. That’s because board partners typically focus upon their premium custom wares rather than these more rudimentary designs.

With that being said, the ASUS Turbo is your typical blower-style setup that ensures all of its hot air is exhausted outside the case. For gamers with small form factor systems, this could be quite beneficial provided temperatures, core speeds and acoustics remain in line.

One interesting addition to this card is the power input which continues the RTX 2080’s tradition of a 6+8 pin layout. With a TDP of 175W, this isn’t strictly necessary but I think NVIDIA is adding a buffer to ensure there’s some overhead when those Tensor and RT cores get utilized sometime in the future. Those take up a good amount of die space and will require no small quantity of power as well.

The other card I received during my last whirlwind day at the office was EVGA’s RTX 2070 XC. I think it happens to represent a perfect example of what many will gravitate towards in the days and months following this launch.

Not only has EVGA given this thing a heavily upgraded heatsink when compared against the ASUS Turbo but the XC’s clock speeds equal those of NVIDIA’s Founders Edition. It also happens to cost $550, nicely bridging the yawning gap between the FE and ASUS’ Turbo.

I won’t comment about EVGA’s latest round of GPU design other than to say I’m not much of a fan but plenty of other folks absolutely love the direction they’re going. What I will say is that EVGA should be commended for keeping the XC to 10.6” which is a good inch shorter than their RTX 2080 alternatives.

OK enough of me prattling on but before I switch over to the benchmarks you are all waiting for, you’ll see some interesting additions to the charts. Frankly, Hardware Canucks caught no small amount of deserved flak for testing the RTX 2080 Ti and RTX 2080 Founders Editions against previous generation FE cards. Some folks claimed our testing didn’t necessarily represent the current state of the market. I’m endeavoring to correct that perception by adding a few cards that do reflect the current Pascal offerings: the EVGA GTX 1080 SC2 iCX Gaming, ASUS GTX 1070 Ti Cerberus and ASUS GTX 1070 STRIX 8G OC.

One thing to remember before clicking over onto the next page is that 1000-series cards are either selling out or their prices are seeing rapid increases as stock levels diminish. Sure there are some great deals to be found on every one of the older cards but you’ll need to search them out. And buyer beware of the used market since there are plenty of heavily used ex-mining cards out there without transferrable warranties.

Test System & Setup

Processor: Intel i9 7900X @ 4.74GHz

Memory: G.Skill Trident X 32GB @ 3600MHz 16-16-16-35-1T

Motherboard: ASUS X299-E STRIX

Cooling: NH-U14S

SSD: Intel 900P 480GB

Power Supply: Corsair AX1200

Monitor: Dell U2713HM (1440P) / Acer XB280HK (4K)

OS: Windows 10 Pro patched to latest version

Drivers:

NVIDIA 411.51 Beta (RTX series)

NVIDIA 399.24 WHQL

AMD 18.9.1 WHQL

*Notes:

– All games tested have been patched to their latest version

– The OS has had all the latest hotfixes and updates installed

– All scores you see are the averages after 3 benchmark runs

– All IQ settings were adjusted in-game and all GPU control panels were set to use application settings

The Methodology of Frame Testing, Distilled

How do you benchmark an onscreen experience? That question has plagued graphics card evaluations for years. While framerates give an accurate measurement of raw performance, there’s a lot more going on behind the scenes which a basic frames per second measurement by FRAPS or a similar application just can’t show. A good example of this is how “stuttering” can occur but may not be picked up by typical min/max/average benchmarking.

Before we go on, a basic explanation of FRAPS’ frames per second benchmarking method is important. FRAPS determines FPS rates by simply logging and averaging out how many frames are rendered within a single second. The average framerate measurement is taken by dividing the total number of rendered frames by the length of the benchmark being run. For example, if a 60 second sequence is used and the GPU renders 4,000 frames over the course of that time, the average result will be 66.67FPS. The minimum and maximum values meanwhile are simply two data points representing single second intervals which took the longest and shortest amount of time to render. Combining these values together gives an accurate, albeit very narrow snapshot of graphics subsystem performance and it isn’t quite representative of what you’ll actually see on the screen.
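To make the arithmetic concrete, here is a minimal Python sketch of a FRAPS-style summary. It is not FRAPS’ actual code: the `fps_summary` helper and the per-second frame counts are made up for illustration, and only the totals (4,000 frames over 60 seconds) come from the example above.

```python
def fps_summary(frames_per_second):
    """Summarize a run from per-second frame counts (one entry per second)."""
    total_frames = sum(frames_per_second)
    duration_s = len(frames_per_second)
    return {
        # Average = total rendered frames / length of the run in seconds
        "average": total_frames / duration_s,
        # Min and max are just the single slowest and fastest one-second bins
        "minimum": min(frames_per_second),
        "maximum": max(frames_per_second),
    }


# Hypothetical split of the 60-second run: 40 s at 70 frames, 20 s at 60 frames.
# 40 * 70 + 20 * 60 = 4,000 frames, matching the example in the text.
counts = [70] * 40 + [60] * 20
summary = fps_summary(counts)

print(round(summary["average"], 2))  # 66.67
print(summary["minimum"], summary["maximum"])  # 60 70
```

Three numbers describe the whole minute, which is exactly why this kind of summary is such a narrow snapshot.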

FRAPS also has the capability to log onscreen average framerates for each second of a benchmark sequence, resulting in FPS over time graphs. It does this by simply logging the reported framerate result once per second. However, in real world applications, a single second is actually a long period of time, meaning the human eye can pick up on onscreen deviations much quicker than this method can actually report them. So what can actually happen within each second of time? A whole lot since each second of gameplay time can consist of dozens or even hundreds (if your graphics card is fast enough) of frames. This brings us to frame time testing and where the Frame Time Analysis Tool gets factored into this equation along with OCAT.

Frame times simply represent the length of time (in milliseconds) it takes the graphics card to render and display each individual frame. Measuring the interval between frames allows for a detailed millisecond by millisecond evaluation of frame times rather than averaging things out over a full second. The larger the amount of time, the longer each frame takes to render. This detailed reporting just isn’t possible with standard benchmark methods.
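As a rough illustration of why this matters, the hypothetical sketch below converts frame times to framerates and builds a one-second window that averages exactly 60FPS even though one of its frames took 56ms. The frame time values are invented for the example and do not come from any card tested here.

```python
def frame_time_to_fps(frame_time_ms):
    """Convert a single frame time in milliseconds to an equivalent framerate."""
    return 1000.0 / frame_time_ms


# Hypothetical second of gameplay: 59 quick frames plus one long hitch.
frame_times_ms = [16.0] * 59 + [56.0]

elapsed_s = sum(frame_times_ms) / 1000.0        # 944 ms + 56 ms = 1.0 s
average_fps = len(frame_times_ms) / elapsed_s   # what a per-second counter reports
worst_frame_fps = frame_time_to_fps(max(frame_times_ms))

print(average_fps)                 # 60.0
print(round(worst_frame_fps, 1))   # 17.9
```

A once-per-second log would record a clean 60FPS for this window, while the frame time log exposes the single frame that briefly rendered at under 18FPS.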

We are using OCAT or FCAT (depending on compatibility) for ALL benchmark results in DX11 and DX12.

Not only does OCAT have the capability to log frame times at various stages throughout the rendering pipeline but it also grants a slightly more detailed look into how certain API and external elements can slow down rendering times.

Since PresentMon and its offshoot OCAT throw out massive amounts of frametime data, we have decided to distill the information down into slightly more easy-to-understand graphs. Within them, we have taken several thousand datapoints (in some cases tens of thousands), converted the frametime milliseconds over the course of each benchmark run to frames per second and then graphed the results. The framerate over time, which is then distilled down further into the typical bar graph, averages out every data point as it’s presented.
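The conversion described above can be sketched roughly as follows. This is an assumption about the general approach rather than the actual scripts behind the charts: the function names and the four-line sample log are hypothetical, and the bar value is taken to be the plain mean of every plotted point.

```python
def fps_timeline(frame_times_ms):
    """Turn a frame time log into (elapsed_seconds, fps) points, one per frame."""
    points = []
    elapsed_ms = 0.0
    for frame_time in frame_times_ms:
        elapsed_ms += frame_time
        points.append((elapsed_ms / 1000.0, 1000.0 / frame_time))
    return points


def average_of_points(points):
    """Bar-graph value, assumed here to be the mean of every plotted FPS point."""
    return sum(fps for _, fps in points) / len(points)


# Hypothetical log; a real capture runs to thousands of lines.
sample_log_ms = [10.0, 10.0, 20.0, 10.0]
timeline = fps_timeline(sample_log_ms)

print([fps for _, fps in timeline])   # [100.0, 100.0, 50.0, 100.0]
print(average_of_points(timeline))    # 87.5
```

Each point keeps its position on the timeline, so a single slow frame stays visible in the graph instead of vanishing into a per-second average.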

Understanding the “Lowest 1%” Lines

In the past we had always focused on three performance metrics: performance over time, average framerate and pure minimum framerates. Each of these was processed from the FCAT or OCAT results and distilled down into a basic chart.

Unfortunately, as more tools have come of age we have decided to move away from the “minimum” framerate indication since it is a somewhat deceptive metric. Here is a great example:

In this example, which is a normalized framerate chart whose origin is a 20,000 line log of frame time milliseconds from FCAT, our old “minimum” framerate would have simply picked out the one point or low spike in the chart above and given that as an absolute minimum. Since we gave you context of the entire timeline graph, it was easy to see how that point related to the overall benchmark run.

The problem with that minimum metric was that it was a simple snapshot that didn’t capture how “smooth” a card’s output was perceived to be. As we’ve explained in the past and here, it is easy for a GPU to have a high average framerate while throwing out a ton of interspersed higher latency frames. Those frames can be perceived as judder and while they may not dominate a gaming experience, their presence can seriously detract from your immersion.

In the case above, there are a number of instances where frame times go through the roof, none of which would accurately be captured by our classic Minimum number. However, if you look closely enough, all of the higher frame latency occurs in the upper 1% of the graph. When translated to framerates, that’s the lowest 1% (remember, high frame times = lower frame rate). This can be directly translated to the overall “smoothness” represented in a given game.

So this leads us to our “Lowest 1%” within the graphs. What this represents is an average of all the lowest 1% of results from a given benchmark output. We basically take thousands of lines within each benchmark capture, find the average frame time and then also parse out the lowest 1% of those results as a representation of the worst case frame time or smoothness. These frame time numbers are then converted to actual framerate for the sake of legibility within our charts.
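A minimal sketch of that calculation, assuming the “Lowest 1%” is the mean of the slowest 1% of frame times converted back to a framerate. The helper names and the 200-line log are hypothetical; a real capture would have thousands of lines.

```python
def average_fps(frame_times_ms):
    """Average framerate derived from the mean frame time."""
    mean_ms = sum(frame_times_ms) / len(frame_times_ms)
    return 1000.0 / mean_ms


def lowest_one_percent_fps(frame_times_ms):
    """Mean of the slowest 1% of frames, expressed as a framerate."""
    slowest_first = sorted(frame_times_ms, reverse=True)
    # High frame times = low framerates, so the "lowest 1%" is the top of this list.
    count = max(1, len(slowest_first) // 100)
    worst = slowest_first[:count]
    return 1000.0 / (sum(worst) / len(worst))


# Hypothetical capture: 198 smooth frames and two hitches (40 ms and 60 ms).
log_ms = [10.0] * 198 + [40.0, 60.0]

print(round(average_fps(log_ms), 2))        # 96.15
print(lowest_one_percent_fps(log_ms))       # 20.0
print(round(1000.0 / max(log_ms), 2))       # 16.67, the old single-point minimum
```

The old minimum reports only the one worst frame, while the 1% figure averages the whole slow tail, so it is less sensitive to a lone outlier.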

Battlefield 1 Performance

Battlefield 1 has become known as one of the most popular multiplayer games around but it also happens to be one of the best looking titles too. It also happens to be extremely well optimized with even the lowest end cards having the ability to run at high detail levels.

In this benchmark we use a run-through of The Runner level after the dreadnought barrage is complete and you need to storm the beach. This area includes all of the game’s hallmarks in one condensed area with fire, explosions, debris and numerous other elements layered over one another for some spectacular visual effects.

Call of Duty: World War II Performance

The latest iteration in the COD series may not drag out niceties like DX12 or particularly unique playing styles but it nonetheless is a great looking game that has plenty of action and drama, not to mention a great single player storyline.

This benchmark takes place during the campaign’s Liberation storyline wherein we run through a sequence combining various indoor and outdoor elements along with some combat, explosions and set pieces.

Destiny 2 Performance

Destiny is a game that continues to evolve to suit online gameplay and new game styles but it has always remained a good looking DX11-based title. For this benchmark we use the single player Riptide mission which combines environmental effects like rain, an open world setting and plenty of scripted combat.

Multiplayer maps may be this game’s most-recognized element but unfortunately performance in those is highly variable.

Far Cry 5 Performance

With a beautiful open world but a day / night cycle that can play havoc with repeatable, accurate benchmarking, Far Cry 5 has a love / hate relationship around here. In this benchmark we use the area around the southwest region’s apple orchards with a basic run-through alongside touching off a small brushfire with explosives.

Forza Motorsport 7 DX12 Performance

Forza 7 is a racing game with a very similar heart to the legendary Gran Turismo series and it looks simply epic. It also happens to use a pretty efficient DX12 implementation. For this benchmark we use the Spa-Francorchamps track along with a full field of competing cars. In addition, the rain effects are turned on to put even more pressure on the GPUs.

Hellblade: Senua’s Sacrifice Performance

Hellblade is a game we wanted to feature here not just because of its amazing graphics and use of the ubiquitous Unreal Engine 4 but also because it’s from a small studio and it deals with mental illness. It’s a great game and you should pick it up.

Our benchmark run starts at the beginning of the Fire Realm section and is a simple walkthrough of the various zones within this level.

Hitman DX12 Performance

The Hitman franchise has been around in one way or another for the better part of a decade and this latest version is arguably the best looking. Adjustable to both DX11 and DX12 APIs, it has a ton of graphics options, some of which are only available under DX12.

For our benchmark we avoid using the in-game benchmark since it doesn’t represent actual in-game situations. Instead the second mission in Paris is used. Here we walk into the mansion, mingle with the crowds and eventually end up within the fashion show area.

Overwatch Performance

Overwatch continues to be one of the most popular games around right now and while it isn’t particularly stressful upon a system’s resources, its Epic setting can provide a decent workout for all but the highest end GPUs. In order to eliminate as much variability as possible, for this benchmark we use a simple “offline” Bot Match so performance isn’t affected by outside factors like ping times and network latency.

Middle Earth – Shadow of War Performance

Much like the original Middle Earth – Shadow of Mordor, the new Shadow of War takes place in a relatively open environment, offering plenty of combat and sweeping vistas. The benchmark run we chose starts on a rooftop in Minas Ithil and gradually runs through the streets and vaults over buildings. A few explosions are thrown in for good measure too. Due to the ultra high resolution textures, even the best GPUs can be brought below 60FPS here.

Rainbow 6: Siege Performance

Rainbow 6: Siege has been around for a while now but it is continually receiving updates and it remains one of the best co-op multiplayer games on the market. Meanwhile, its UHD Texture Pack allows for some pretty great looking unit models and ensures even the best graphics cards are brought to their knees.

As with most online titles, we needed to avoid multiplayer due to the high variability of performance and randomness. Instead, the High Value Target mission in Situations was used.

Warhammer 2: Total War DX12 Performance

Much of this game can be CPU-limited but by increasing in-game details to max, it puts a massive amount of stress on the GPU. Unfortunately, after years in beta, the DX12 implementation is still not all that great.

For this benchmark, we load up an ultra high points multiplayer saved battle between the Empire and Skaven. That means plenty of special effects with Hellfire rockets and warp fire being thrown everywhere. We then go through a set of pans and zooms to replicate gameplay.

Wolfenstein – New Colossus Vulkan Performance

This is the only Vulkan game in this entire review and that’s simply because this API just isn’t used all that much. It never has been. With that said, it has allowed id Tech to create an amazingly detailed world while still offering great overall performance.

For this test the Manhattan level is used so we can combine interior, exterior and preset combat elements into one 60-second run.

Witcher 3 Performance

Other than being one of 2015’s most highly regarded games, The Witcher 3 also happens to be one of the most visually stunning as well. This benchmark sequence has us riding through a town and running through the woods; two elements that will likely take up the vast majority of in-game time.

Analyzing Temperatures & Frequencies Over Time

Modern graphics card designs make use of several advanced hardware and software facing algorithms in an effort to hit an optimal balance between performance, acoustics, voltage, power and heat output. Traditionally this leads to maximized clock speeds within a given set of parameters. Conversely, if one of those last two metrics (those being heat and power consumption) steps into the equation in a negative manner it is quite likely that voltages and resulting core clocks will be reduced to ensure the GPU remains within design specifications. We’ve seen this happen quite aggressively on some AMD cards while NVIDIA’s reference cards also tend to fluctuate their frequencies. To be clear, this is a feature by design rather than a problem in most situations.

In many cases clock speeds won’t be touched until the card in question reaches a preset temperature, whereupon the software and onboard hardware will work in tandem to carefully regulate other areas such as fan speeds and voltages to ensure maximum frequency output without an overly loud fan. Since this algorithm typically doesn’t kick into full force in the first few minutes of gaming, the “true” performance of many graphics cards won’t be realized through a typical 1-3 minute benchmarking run. Hence why we use a 5-minute warm up period before all of our benchmarks.

I was a bit worried that the blower-style card from ASUS would hit some higher temperatures but that didn’t really happen. The max it achieved was 73°C but the EVGA XC’s dual fan downdraft cooler ended up easily beating it with a peak temp of 68°C.

You can see that in order to maintain that 73°C the ASUS Turbo has to fluctuate its clock speeds quite a bit and it ends up running around 1680MHz which is right in line with its specs. EVGA on the other hand is REALLY stable after it settles around 1810MHz.

As you might imagine this small delta between the clock speed of these cards doesn’t lead to all that much more performance. In total we are looking at about 5% which, in this game’s case, translates to about 1 frame per second.
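For reference, the clock speed gap can be worked out from the two sustained frequencies quoted above. The sketch below only restates that arithmetic; the `percent_delta` helper is a hypothetical name.

```python
def percent_delta(value, baseline):
    """Percentage by which value exceeds baseline."""
    return (value - baseline) * 100.0 / baseline


ASUS_TURBO_MHZ = 1680   # approximate sustained clock quoted for the ASUS Turbo
EVGA_XC_MHZ = 1810      # approximate sustained clock quoted for the EVGA XC

clock_delta = percent_delta(EVGA_XC_MHZ, ASUS_TURBO_MHZ)
print(round(clock_delta, 1))  # 7.7
```

So a clock advantage of just under 8% shows up as roughly 5% more performance in this test, which suggests framerates don’t scale one-to-one with core clock here.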

Acoustical Testing

What you see below are the baseline idle dB(A) results attained for a relatively quiet open-case system (specs are in the Methodology section) sans GPU along with the attained results for each individual card in idle and load scenarios. The meter we use has been calibrated and is placed at seated ear-level exactly 12” away from the GPU’s fan. For the load scenarios, Hellblade: Senua’s Sacrifice at 4K is used in order to generate a constant load on the GPU(s) over the course of 10 minutes.

One of the worries when testing commenced was that the ASUS RTX 2070 card would sound more like a turbine at full tilt rather than the quiet GPUs we have come to expect from the Pascal generation. Luckily, while the fan can be heard as a muted “whoosh” sound, there isn’t anything to be worried about. This is one of the quieter blower-style board partner cards I’ve come across in a while.

EVGA on the other hand…well what can I say? It’s quiet as a mouse and won’t be audible over the sound of other cooling devices installed in your system.

System Power Consumption

For this test we hooked up our power supply to a UPM power meter that will log the power consumption of the whole system twice every second. In order to stress the GPU as much as possible we used 10 minutes of Hellblade: Senua’s Sacrifice running static while letting the card sit at a stable Windows desktop for 15 minutes to determine the peak idle power consumption.
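As a rough sketch of how such a log could be boiled down, assume one whole-system wattage reading per sample at two samples per second. The readings below are placeholders rather than measurements from this review, and the helper names are hypothetical.

```python
SAMPLES_PER_SECOND = 2  # the meter logs whole-system power twice every second


def expected_samples(minutes):
    """Number of readings a run of the given length should produce."""
    return minutes * 60 * SAMPLES_PER_SECOND


def summarize_watts(samples):
    """Peak and average draw from a list of wattage readings."""
    return {"peak": max(samples), "average": sum(samples) / len(samples)}


load_samples = expected_samples(10)   # 10-minute load run
idle_samples = expected_samples(15)   # 15-minute idle desktop run

# Placeholder idle readings in watts, for illustration only.
placeholder_idle_log = [61.0, 60.5, 62.0, 60.5]
idle = summarize_watts(placeholder_idle_log)

print(load_samples, idle_samples)       # 1200 1800
print(idle["peak"], idle["average"])    # 62.0 61.0
```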

Looking at these numbers, I have a funny feeling we can’t consider the Turing architecture to be Pascal-beating just yet. While standard games do show that from a performance per watt perspective the 2070 is superior to a pre-overclocked GTX 1080, one has to wonder what this chart would look like if RTX features were enabled. Remember, those would technically increase consumption further since they lie dormant in non-RTX titles.

With that being said, despite running substantially cooler, the EVGA RTX 2070 does tend to consume a bit more juice. That was to be expected since it is pre-overclocked.

Conclusion – A Fresh RTX Perspective?

Well this was certainly an interesting review wasn’t it? From my perspective, the inclusion of custom cards gave a very different and slightly more accurate perspective of the current GPU market. Unless some of the 10-series cards have gone out of stock by the time you read this, unlike when we test reference versus reference, you can actually go out and buy these GPUs. Interestingly, this approach both benefited and caused issues for the RTX 2070.

I’ve already mentioned that this launch was completely hooped from the get go by poor communications with NVIDIA, last minute sampling from board partners and an impending trip for the HWC team. But despite all of that, it is great to see the EVGA RTX 2070 XC and ASUS RTX 2070 Turbo being part of the comparison since they are more representative of NVIDIA’s retail strategy than any Founders Edition. Alongside the custom GTX cards that showed their faces, these GPUs allowed for a pretty balanced perspective.

Speaking of balanced perspective, let’s take a look at where the ASUS reference card ends up in the overall scheme of things.

In most games this reference-spec’d GPU basically trades blows with an overclocked GTX 1080 at both 1440P and 4K. On one hand that’s a good thing given the fact these cards are evenly matched from a pricing perspective. However, that also means the RTX 2070 doesn’t bring anything new to the table in the $500 market. Its starting cost is just so high in relation to its direct 1070-series predecessors the only valid comparison point becomes that 1080.

Now talking about those 1070-series cards, they do take a back seat to the RTX 2070’s framerates but I have to wonder if that even matters right now. Until NVIDIA’s RTX features are enabled in a wide swath of games the 2070’s premium over those perfectly capable GPUs just becomes nonsensical. NVIDIA is in a tough position here since they need to increase their new series’ market share to entice developers to incorporate AI and ray tracing. However these high prices will likely keep attachment rate relatively low.

Now EVGA’s RTX 2070 XC is a different beast altogether since for 10% more than the ASUS card you get 4-6% better performance, cooler temperatures and improved acoustics. At $550 it also starts to make the $800 (!!) RTX 2080 Founders Edition look positively overpriced in some cases. This is an impressive card in its own right and one that makes me wonder why anyone would possibly want the $50 more expensive RTX 2070 Founders Edition.
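The pricing math behind those percentages is simple enough to sketch. The prices and the 4-6% performance range are the ones quoted in this review; the helper names are hypothetical, and the frames-per-dollar figure deliberately ignores temperatures and acoustics, which are a big part of the XC’s appeal.

```python
def premium_pct(price, baseline):
    """Percentage price premium over a baseline price."""
    return (price - baseline) * 100.0 / baseline


def relative_perf_per_dollar(perf_ratio, price, baseline_price):
    """Performance per dollar relative to the baseline card (1.0 = equal value)."""
    return perf_ratio / (price / baseline_price)


ASUS_TURBO = 500             # the "starting at" price
EVGA_XC = 550
FOUNDERS = EVGA_XC + 50      # the FE costs $50 more than the XC

print(premium_pct(EVGA_XC, ASUS_TURBO))    # 10.0
print(premium_pct(FOUNDERS, ASUS_TURBO))   # 20.0

# The XC's 4-6% performance advantage against its 10% higher price
for perf_ratio in (1.04, 1.06):
    print(round(relative_perf_per_dollar(perf_ratio, EVGA_XC, ASUS_TURBO), 3))
# prints 0.945, then 0.964
```

On raw framerate per dollar the XC lands a few percent behind the $500 card, so its case rests on the cooler and quieter operation as much as the extra frames.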

But while I can definitely see people gravitating towards the EVGA card, that doesn’t mean it provides a good value. By and large the RTX cards haven’t improved the price to performance quotient by any perceptible amount. Remember, the GTX 1080 was launched at $550 and here we have a $500 GPU launching more than two years later that offers at most 10% better performance in today’s games.

It is only a matter of time until stock of 10-series cards has been completely expunged from retailers’ shelves and when that happens gamers who want an upgrade won’t have any choice but to turn to RTX. Competition from both Intel and AMD is a long way off. Luckily, among the current offerings the RTX 2070 is a standout for providing the best bang for buck, particularly that EVGA card. So if you absolutely want a brand new GPU, it will be a relatively safe option.

There’s no denying the problems with this launch run deep but it was nonetheless refreshing to see cards that live outside the Founders Edition sphere. NVIDIA got what they wanted: the ASUS Turbo and EVGA XC end up putting a somewhat positive spin on what could have been a very negative review cycle. But while they’re both great cards, at this point in time I don’t see any reason to recommend any RTX GPU until we can see firsthand whether those much-touted features make NVIDIA’s current premiums justifiable.