RTX 3090 Review – Gaming Benchmarks and CPU Scaling

Well here we are again with another NVIDIA GPU launch, and this time it’s the GeForce RTX 3090. This is a card that costs $1,500 USD and it’s supposed to give you the best that Ampere can offer right now. To give you a bit of an idea what that means in terms of hardware dollars, you can pick up a Ryzen 7 3800XT, 32GB of DDR4-3200 memory, an EVGA RTX 3080 XC3 – if you can find one of course – and still have some money left for some storage or maybe even a really nice dinner.

I want you to keep that in mind as I go through this review, because it really puts everything into perspective. Based on our RTX 3080 coverage a lot of you on Twitter and Instagram were talking about waiting for the RTX 3090 before upgrading, so this article is going to be a really honest discussion about whether or not gamers should actually make the jump.

That leads me into what we are covering. First there are the usual gaming benchmarks at 1440P and 4K. The next step is to see if the RTX 3090 is being CPU limited by re-running all the benchmarks with an overclocked processor at 4K, 1440P, and 1080P as well. Now obviously we know that the RTX 3090 isn’t designed to be a pure gaming GPU since it offers huge benefits in compute workloads, but it’s still being flogged to gamers with deep pockets. Everything seems to be pointing in that direction because it comes bundled with a free copy of Watch Dogs: Legion and NVIDIA popped it into their GeForce gaming lineup rather than giving it Titan branding. I also want to mention that reviews like this one weren’t allowed until orders went live, so a lot of people have already made their purchase decisions by now. That is regrettable because this review is going to be a warning against gamers actually buying the RTX 3090. Either way, we weren’t sampled the Founders Edition of this GPU, so instead we have this GIGABYTE RTX 3090 that just showed up a few days before launch.

Specs & Pricing

Let’s get started with specs, because there is a bit of a reality check right away. NVIDIA did make it obvious that this card is memory focused above all else. That is a pretty big deal for people using creative, compute, or technical applications that are VRAM limited right now. As a matter of fact, we are close to running up against some memory limitations in DaVinci Resolve ourselves, so I totally back up NVIDIA’s claim in this respect. Remember back when it was launched, the RTX 2080 Ti had some amazing performance uplifts versus the RTX 2080, since it had almost 50% more cores, 3GB of additional memory, and a wider memory interface… for 50% more money. It didn’t offer the best bang for the buck, but it was still a great GPU for gamers who had the money to burn on bragging rights.

The RTX 3090 on the other hand has only 20% more cores than the RTX 3080, very similar clock speeds, but more than double the memory, and a wider memory interface. As a result, this card isn’t designed to be much faster than the RTX 3080 in games. And let me repeat that: The RTX 3090 is not meant to beat the RTX 3080 by a substantial margin, but it’s more than double the price. Meanwhile, custom cards will go for between a few dollars and a few hundred dollars more. Something like this GIGABYTE RTX 3090 Gaming OC is supposed to hit $1,580 USD, but some other versions like the ASUS ROG STRIX or the AORUS XTREME will be above $1,700 USD.

Temperatures / Frequencies / Power Consumption

The interesting thing is the Gaming OC is able to hit a constant 1980MHz while running at under 70°C. That is about 100MHz faster than the RTX 3080 Founders Edition we have, while operating at pretty similar temperatures. Honestly, I can’t tell you enough how impressive this is since the RTX 3090 core puts out a lot more heat. However, this also brings up a pretty good point about comparisons in this review, since this GIGABYTE model will make the RTX 3090 look a bit better than it would have at stock speeds. Noise was really well managed too, but anything other than near silence would have been a failure for GIGABYTE given the sheer size of their heatsink. Interestingly, power is pretty similar between these two cards with the pre-overclocked RTX 3090 drawing at most 35W more, but don’t let this chart fool you. The RTX 3090 and RTX 3080 are two of the most power hungry GPUs of all time, and we would recommend at least a 700W power supply.

One Chunky Card

Now on camera this GIGABYTE RTX 3090 might look like a pretty normal custom GPU until you realize it’s absolutely massive, even compared to the RTX 2080 Ti version. You need to be really aware of how big it is, not just the triple slot height or the length, but the width as well. It might start off slim, but there is a huge overhang that juts out past the PCB. Another thing you will need to be aware of is the potential for GPU sag. These custom RTX 3000 series cards are big, bulky, and heavy. I know EVGA is offering anti-sag brackets with some of their cards, but GIGABYTE doesn’t. We noticed a few odd things with this GPU. First of all, GIGABYTE has used a PCB that is shorter than the heatsink, but they placed the dual 8-pin power connectors closer to the card’s rear end. Those are connected to the PCB with extension wires. They also tried to copy NVIDIA’s airflow design by opening a section of the back plate, so one of the fans could breathe a little bit better. The problem is that those power cables actually block off a good amount of that fan’s airflow.

Benchmarks

With that out of the way, let’s get into the benchmarks with our usual test system running at stock settings. Well these results are certainly interesting, aren’t they? From the very first day NVIDIA announced these new GPUs, we said that the RTX 3090 won’t offer much of a gaming advantage over the RTX 3080 and this proves it. I also want to mention again that our GIGABYTE RTX 3090 is slightly overclocked whereas the RTX 3080 Founders Edition isn’t, so if I were to compare these cards in their stock configurations the separation would be slightly less… and even now it isn’t that much.

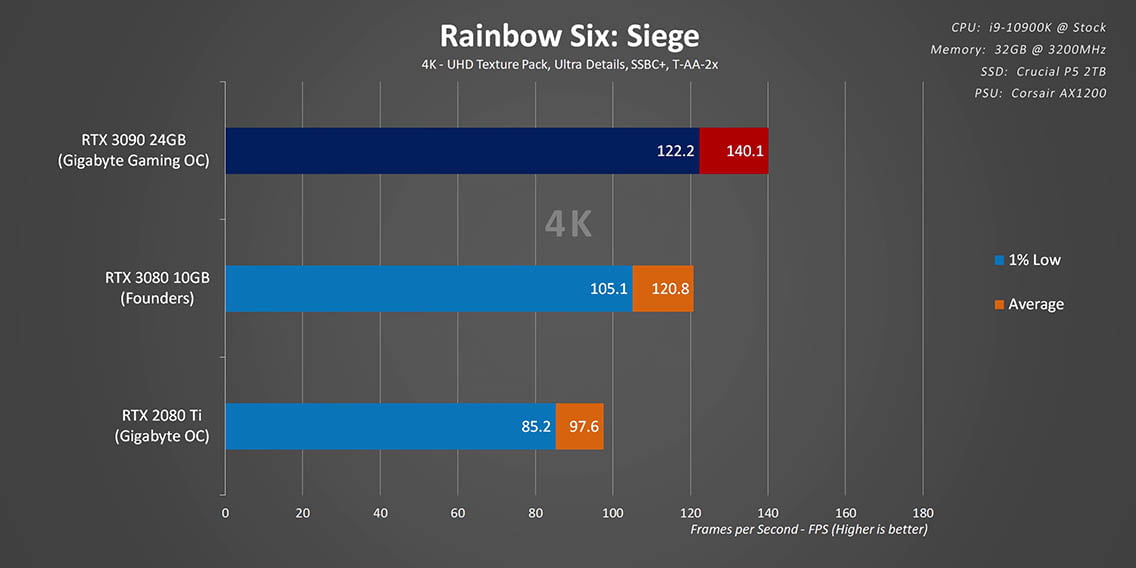

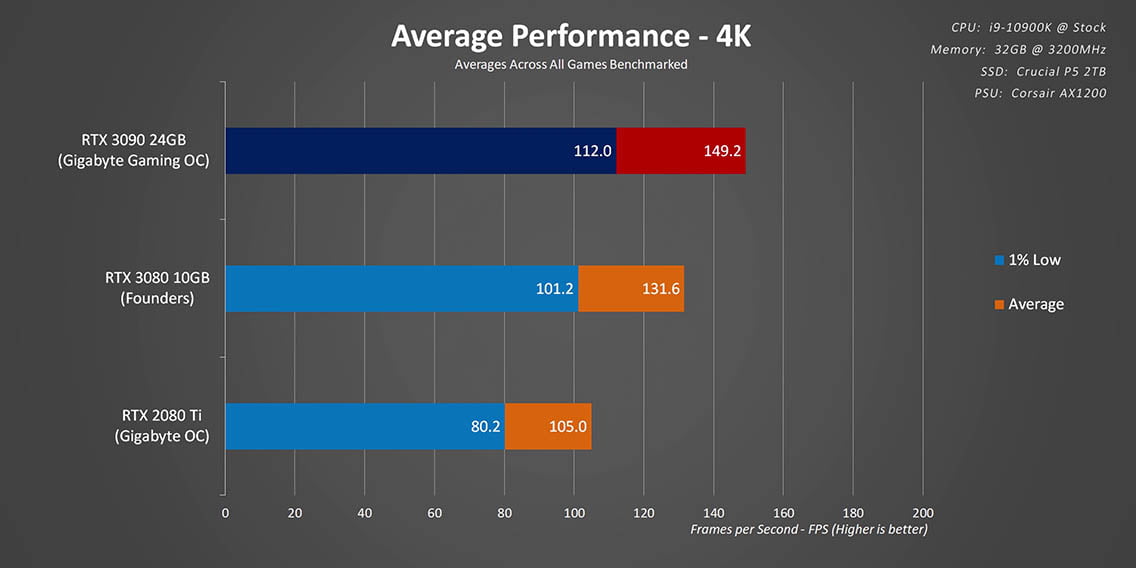

Across all the games at 1440P we only saw about an 8% overall increase in average frame rates, and even less than that with the 1% lows. One thing I do want to mention is that Jedi: Fallen Order and Overwatch have built-in frame rate limiters that can’t be overridden, and it looks like the RTX 3090 hit those a few times so its performance has been capped a bit. Moving up to 4K removes those limits, so the RTX 3090 is now about 14% ahead on average, and it shows an 11% improvement in 99th percentile frame rates as well. The simple fact is that even at 4K and the highest detail settings, games become limited by GPU processing long before they need more than 10GB of VRAM or the memory bandwidth provided by the RTX 3090. You can skew the results and make this card look a lot better by throwing in 8K, but that is just marketing hype. Is anyone actually going to play at that resolution? Probably not.

CPU Bottleneck?

Like I said at the beginning, these super close results got us wondering if the RTX 3090 was being bottlenecked by the Core i9-10900K. Yes, it’s the fastest CPU available for gaming right now, but with a GPU as powerful as this one we could be running up against the limits of processor horsepower. So we went off to do more benchmarks, and this time instead of the i9-10900K running at the usual 4.8GHz to 4.9GHz in games we overclocked it to a constant 5.3GHz. Not only that, but we are adding 1080P results here too since those could really tell us if there is a processor bottleneck somewhere. I know what you are thinking, no one is going to buy a high-end GPU to play at 1080P, but I disagree because a high-end graphics card can be an interesting option for competitive gamers who play at low resolutions to get extremely fast frame rates and better reaction times.
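The logic behind this test can be written down as a quick check: if overclocking the CPU raises frame rates by more than run-to-run noise, the stock system was CPU limited at that resolution. Below is a minimal Python sketch of that idea. The function names, the 3% noise threshold, and the frame rates are all assumptions made up for illustration; none of them are measurements from this review.

```python
def cpu_scaling(stock_fps, oc_fps):
    """Fractional frame-rate gain from overclocking the CPU."""
    return oc_fps / stock_fps - 1.0


def looks_cpu_limited(stock_fps, oc_fps, threshold=0.03):
    """Flag a result as CPU limited when the gain exceeds the noise margin.

    The 3% threshold is an assumed noise margin, not a value from the review.
    """
    return cpu_scaling(stock_fps, oc_fps) > threshold


# Placeholder numbers for illustration only; these are NOT measured results.
example = {"1080P": (200.0, 216.0), "4K": (90.0, 90.5)}

for resolution, (stock, oc) in example.items():
    gain = cpu_scaling(stock, oc)
    limited = looks_cpu_limited(stock, oc)
    print(f"{resolution}: {gain:+.1%} from CPU overclock, CPU limited: {limited}")
```

With these placeholder inputs, the 1080P entry is flagged as CPU limited and the 4K entry is not, which is the pattern you would expect when higher resolutions shift the load onto the GPU.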

CPU Scaling at 1080P

Kicking things off with CS:GO, and this game was obviously CPU limited in a big way since even the RTX 3080 with an overclocked CPU can actually beat the RTX 3090 in a stock system. This is also why you won’t see much difference between the stock system’s 1080P and 1440P results. With our overclocked configuration it looks like we are smashing head first into a game engine limiter. Speaking of limiters, that is exactly what happens with Jedi: Fallen Order, since it caps at 144FPS, and with Overwatch as well. By the way, Overwatch’s new patch increases the frame cap to 400FPS, so we needed to re-benchmark all the cards here. Now there are a few games with minor increases with the overclocked CPU, but for the most part both the RTX 3080 and RTX 3090 are showing that GPU limitations still exist at 1080P.

CPU Scaling at 1440P

Moving up to 1440P brings everything even closer together, but the 5.3GHz i9-10900K positively affects the 99th percentile frame rates more than averages. Either way, other than a few exceptions, the RTX 3090 wasn’t bottlenecked by the CPU at 1080P and it certainly isn’t at 1440P either.

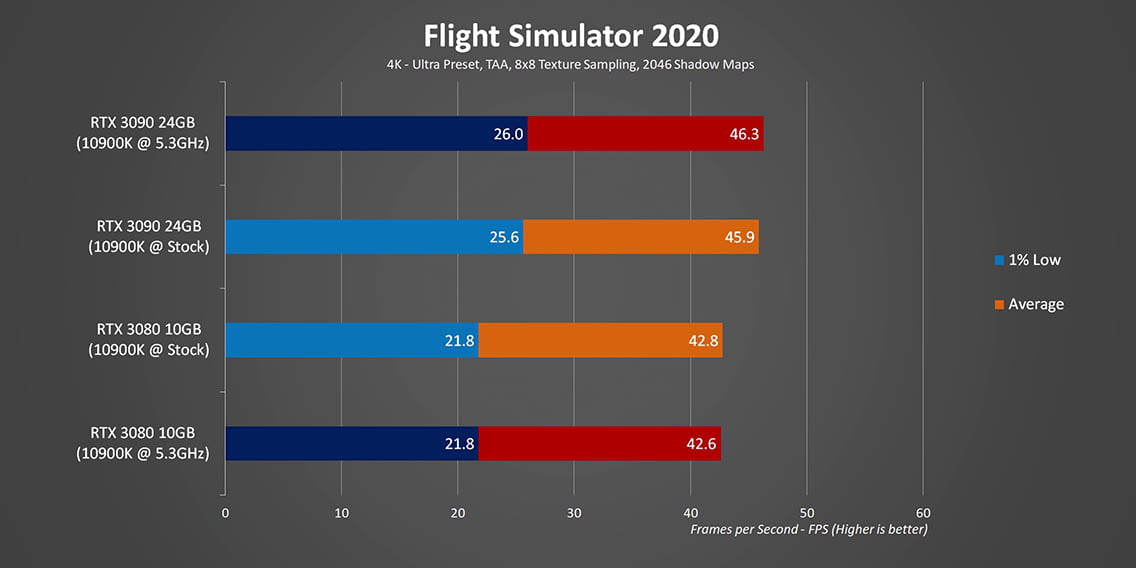

CPU Scaling at 4K

At 4K there is basically no difference between the results, and the separation between the RTX 3080 and RTX 3090 sticks to about 13% on average. That was pretty much expected since 4K puts all the emphasis on the GPU, even in more basic DX9 titles.

Conclusion

With all of that done, I think it’s time to wrap this up. There is no doubt that this GPU should be a go-to purchase for creators, digital artists, or anyone who needs access to a ton of compute horsepower. I can’t argue with that at all, since it is going to be a critical component in my own editing workflow going forward. However, like I said in the intro, there are plenty of reasons why this review was done from a gaming perspective and I’m hoping it helps some people hit the brakes before giving in to the hyped-up consumerism and making a really poor buying decision.

Flagship GPUs are always pretty low on the value for money scale and there is no denying it. A good example of it was the RTX 2080 Ti. Back in 2018 it cost 50% more than the RTX 2080 and it featured between 20% and 37% better performance. Now in 2020 the RTX 3080 is an amazing value – provided you can actually find one for $700 – maybe its price is even too low, but that’s a conversation for another time. Where the RTX 3090 slots into this discussion is a good question since it costs more than double what the RTX 3080 does while offering at the most 14% higher frame rates at 4K. At launch this makes it one of the worst values versus a less expensive alternative we have ever seen from any GeForce or Radeon GPU. Obviously past Titan cards may have been worse, but remember according to NVIDIA this isn’t a Titan, it’s a GeForce card. I guess that’s the conclusion. From a creator standpoint I’m pretty excited about the RTX 3090 and what it can bring to the table, and I’m also looking forward to popping this thing into one of my new editing workstations. But for gamers the real question is how much are you willing to pay for just 14% better FPS? NVIDIA is daring you to say double, and they need you to say it because it will guide their next steps, and that should be a very, very big red flag for everyone.
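To put a number on that value gap, here is a rough Python sketch of the arithmetic using only the figures quoted in this review: $700 for the RTX 3080, $1,500 for the RTX 3090, and a 14% average uplift at 4K. The function and variable names are just for illustration, and street prices will obviously move the result.

```python
# Prices in USD and the 4K uplift are the figures quoted in the review.
RTX_3080_PRICE = 700
RTX_3090_PRICE = 1500
UPLIFT_4K = 0.14  # RTX 3090 average frame-rate advantage at 4K


def relative_value(base_price, new_price, uplift):
    """Performance per dollar of the new card relative to the base card."""
    perf_ratio = 1.0 + uplift
    price_ratio = new_price / base_price
    return perf_ratio / price_ratio


price_ratio = RTX_3090_PRICE / RTX_3080_PRICE
value = relative_value(RTX_3080_PRICE, RTX_3090_PRICE, UPLIFT_4K)
extra_cost = RTX_3090_PRICE - RTX_3080_PRICE
cost_per_point = extra_cost / (UPLIFT_4K * 100)

print(f"Price ratio: {price_ratio:.2f}x")
print(f"Performance per dollar vs RTX 3080: {value:.0%}")
print(f"Extra cost per percentage point of 4K uplift: ${cost_per_point:.0f}")
```

On those inputs the RTX 3090 costs about 2.14x as much, delivers roughly 53% of the RTX 3080’s performance per dollar at 4K, and works out to around $57 for each extra percentage point of frame rate.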